This post is co-written with HyeKyung Yang, Jieun Lim, and SeungBum Shim from LotteON.

LotteON aims to be a platform that not only sells products, but also provides a personalized recommendation experience tailored to your preferred lifestyle. LotteON operates various specialty stores, including fashion, beauty, luxury, and kids, and strives to provide a personalized shopping experience across all aspects of customers’ lives.

To enhance the shopping experience of LotteON’s customers, the recommendation service development team is continuously improving the recommendation service to provide customers with the products they are looking for or may be interested in at the right time.

In this post, we share how LotteON improved their recommendation service using Amazon SageMaker and machine learning operations (MLOps).

Problem definition

Traditionally, the recommendation service was mainly provided by identifying the relationship between products and offering products that were highly relevant to the product selected by the customer. However, it was necessary to upgrade the recommendation service to analyze each customer’s taste and meet their needs. Therefore, we decided to introduce a deep learning-based recommendation algorithm that can identify not only linear relationships in the data, but also more complex relationships. For this reason, we built the MLOps architecture to manage the created models and provide real-time services.

Another requirement was to build a continuous integration and continuous delivery (CI/CD) pipeline that can be integrated with GitLab, a code repository used by existing recommendation platforms, to add newly developed recommendation models and create a structure that can continuously improve the quality of recommendation services through periodic retraining and redeployment of models.

In the following sections, we introduce the MLOps platform that we built to provide high-quality recommendations to our customers and the overall process of serving a deep learning-based recommendation algorithm (Neural Collaborative Filtering) in real time and introducing it to LotteON.

Solution architecture

The following diagram illustrates the solution architecture for serving Neural Collaborative Filtering (NCF) algorithm-based recommendation models as MLOps. The main AWS services used are SageMaker, Amazon EMR, AWS CodeBuild, Amazon Simple Storage Service (Amazon S3), Amazon EventBridge, AWS Lambda, and Amazon API Gateway. We combined several AWS services using Amazon SageMaker Pipelines and designed the architecture with the following components in mind:

Data preprocessing

Automated model training and deployment

Real-time inference through model serving

CI/CD structure

The preceding architecture shows the MLOps data flow, which consists of three decoupled passes:

Code preparation and data preprocessing (blue)

Training pipeline and model deployment (green)

Real-time recommendation inference (brown)

Code preparation and data preprocessing

The preparation and preprocessing phase consists of the following steps:

The data scientist publishes the deployment code containing the model and the training pipeline to GitLab, which is used by LotteON, and Jenkins uploads the code to Amazon S3.

The EMR preprocessing batch runs through Airflow according to the specified schedule. The preprocessed data is loaded into MongoDB, which is used as a feature store along with Amazon S3.

Training pipeline and model deployment

The model training and deployment phase consists of the following steps:

After the training data is uploaded to Amazon S3, CodeBuild runs based on the rules specified in EventBridge.

The SageMaker pipeline predefined in CodeBuild runs, and sequentially runs steps such as preprocessing (including provisioning), model training, and model registration.

When training is complete, the Lambda step updates the SageMaker endpoint with the newly deployed model.

Real-time recommendation inference

The inference phase consists of the following steps:

The client application makes an inference request to API Gateway.

API Gateway sends the request to Lambda, which makes an inference request to the model in the SageMaker endpoint to request a list of recommendations.

Lambda receives the list of recommendations and provides them to API Gateway.

API Gateway provides the list of recommendations to the client application using the Recommendation API.

Recommendation model using NCF

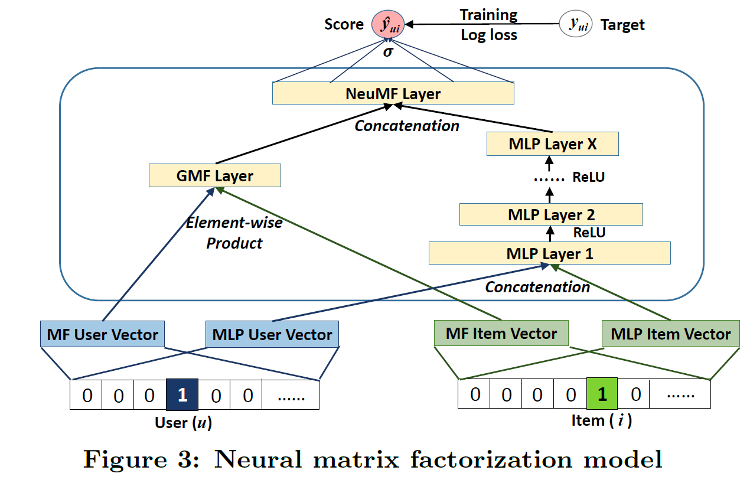

NCF is an algorithm based on a paper presented at the International World Wide Web Conference in 2017. It addresses the limitations of linear matrix factorization, which is often used in existing recommendation systems, with collaborative filtering based on a neural network. By adding non-linearity through the neural network, the authors were able to model a more complex relationship between users and items. The data for NCF is interaction data where users react to items, and the overall structure of the model is shown in the following figure (source: https://arxiv.org/abs/1708.05031).

Although NCF has a simple model architecture, it has shown good performance, which is why we chose it as the prototype for our MLOps platform. For more information about the model, refer to the paper Neural Collaborative Filtering.

In the following sections, we discuss how this solution helped us build the aforementioned MLOps components:

Data preprocessing

Automating model training and deployment

Real-time inference through model serving

CI/CD structure

MLOps component 1: Data preprocessing

For NCF, we used user-item interaction data, which requires significant resources to process the raw data collected at the application and transform it into a form suitable for learning. With Amazon EMR, which provides fully managed environments like Apache Hadoop and Spark, we were able to process data faster.

The data preprocessing batches were created by writing a shell script to run Amazon EMR through AWS Command Line Interface (AWS CLI) commands, which we registered to Airflow to run at specific intervals. When the preprocessing batch was complete, the training/test data needed for training was partitioned based on runtime and stored in Amazon S3. The following is an example of the AWS CLI command to run Amazon EMR:
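As an illustrative sketch only (not LotteON's actual script), the following Python helper assembles an `aws emr create-cluster` command and partitions the output path by run date. The bucket name, script name, cluster name, release label, instance settings, and S3 layout are all assumptions made for this example.

```python
import shlex
from datetime import datetime, timezone


def build_emr_command(run_time, bucket="example-bucket", script="preprocess.py"):
    """Return the argv list for an `aws emr create-cluster` call (values are hypothetical)."""
    # Partition the output by run date, for example .../dt=2024-01-31/
    partition = run_time.strftime("dt=%Y-%m-%d")
    output_path = f"s3://{bucket}/ncf/train-data/{partition}/"
    # Shorthand syntax for a single Spark step that runs the preprocessing script.
    step = (
        "Type=Spark,Name=ncf-preprocess,ActionOnFailure=TERMINATE_CLUSTER,"
        f"Args=[s3://{bucket}/code/{script},--output,{output_path}]"
    )
    return [
        "aws", "emr", "create-cluster",
        "--name", "ncf-preprocessing",
        "--release-label", "emr-6.15.0",
        "--applications", "Name=Spark",
        "--instance-type", "m5.xlarge",
        "--instance-count", "3",
        "--use-default-roles",
        "--auto-terminate",
        "--steps", step,
    ]


if __name__ == "__main__":
    argv = build_emr_command(datetime(2024, 1, 31, tzinfo=timezone.utc))
    # Print a copy-pasteable shell command; nothing is executed here.
    print(shlex.join(argv))
```

Building the command as a list keeps quoting explicit, and a scheduler such as Airflow could run the printed command from a shell task.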

MLOps component 2: Automated training and deployment of models

In this section, we discuss the components of the model training and deployment pipeline.

Event-based pipeline automation

After the preprocessing batch was complete and the training/test data was stored in Amazon S3, this event invoked CodeBuild and ran the training pipeline in SageMaker. In the process, the version of the result file of the preprocessing batch was recorded, enabling dynamic control of the version and management of the pipeline run history. We used EventBridge, Lambda, and CodeBuild to connect the data preprocessing steps run by Amazon EMR and the SageMaker training pipeline on an event-based basis.

EventBridge is a serverless service that implements rules to receive events and direct them to destinations, based on the event patterns and destinations you define. The initial role of EventBridge in our configuration was to invoke a Lambda function on the S3 object creation event when the preprocessing batch stored the training dataset in Amazon S3. The Lambda function dynamically modified the buildspec.yml file, which CodeBuild requires in order to run. These modifications covered the path, version, and partition information of the data to train on, which the training pipeline needs. The subsequent role of EventBridge was to dispatch events, triggered by the change to the buildspec.yml file, that started CodeBuild.
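The flow described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the code used at LotteON: the bucket name, key layout (`ncf/train-data/<version>/<partition>/<file>`), environment variable names, and build commands are hypothetical. Only the general shape of the S3 "Object Created" event and of a buildspec file follows the AWS formats.

```python
import json

# Hypothetical EventBridge rule pattern: match S3 "Object Created" events
# under the prefix where the preprocessing batch writes training data.
EVENT_PATTERN = {
    "source": ["aws.s3"],
    "detail-type": ["Object Created"],
    "detail": {
        "bucket": {"name": ["example-bucket"]},
        "object": {"key": [{"prefix": "ncf/train-data/"}]},
    },
}

BUILDSPEC_TEMPLATE = """\
version: 0.2
env:
  variables:
    TRAIN_DATA_S3_URI: "{s3_uri}"
    DATA_VERSION: "{version}"
    DATA_PARTITION: "{partition}"
phases:
  install:
    commands:
      - pip install -r requirements.txt
  build:
    commands:
      - python pipeline.py
"""


def render_buildspec(event):
    """Build buildspec.yml text from an S3 'Object Created' event."""
    bucket = event["detail"]["bucket"]["name"]
    key = event["detail"]["object"]["key"]
    # Assumed key layout: ncf/train-data/<version>/<partition>/<file>
    _, _, version, partition, _ = key.split("/", 4)
    prefix = key.rsplit("/", 1)[0]
    return BUILDSPEC_TEMPLATE.format(
        s3_uri=f"s3://{bucket}/{prefix}/", version=version, partition=partition
    )


if __name__ == "__main__":
    sample = {"detail": {"bucket": {"name": "example-bucket"},
                         "object": {"key": "ncf/train-data/v3/dt=2024-01-31/_SUCCESS"}}}
    print(json.dumps(EVENT_PATTERN, indent=2))
    print(render_buildspec(sample))
```

In a real Lambda function, the rendered text would then be written back to wherever CodeBuild reads its buildspec from; that upload step is omitted here.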

CodeBuild was responsible for building the source code where the SageMaker pipeline was defined. Throughout this process, it referred to the buildspec.yml file and ran processes such as cloning the source code and installing the libraries needed to build from the path defined in the file. The Project Build tab on the CodeBuild console allowed us to review the build’s success and failure history, along with a real-time log of the SageMaker pipeline’s performance.

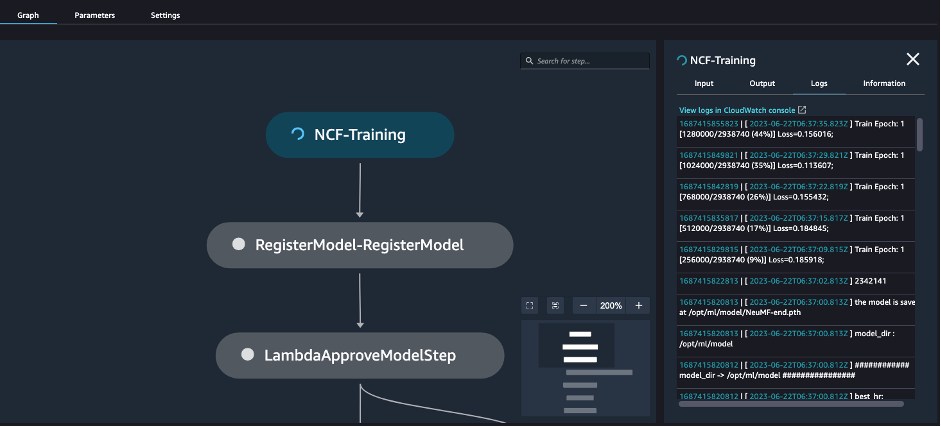

SageMaker pipeline for training

SageMaker Pipelines helps you define the steps required for ML services, such as preprocessing, training, and deployment, using the SDK. Each step is visualized within SageMaker Studio, which is very helpful for managing models, and you can also manage the history of trained models and endpoints that can serve the models. You can also set up steps by attaching conditional statements to the results of the steps, so you can adopt only models with good retraining results or prepare for training failures. Our pipeline contained the following high-level steps:

Model training

Model registration

Model creation

Model deployment

Each step is visualized in the pipeline in Amazon SageMaker Studio, and you can also see the results or progress of each step in real time, as shown in the following screenshot.

Let’s walk through the steps from model training to deployment, using some code examples.

Train the model

First, you define a PyTorch Estimator to use for training and a training step. This requires you to have the training code (for example, train.py) ready in advance and pass the location of the code as the source_dir argument. The training step runs the training code you pass as the entry_point argument. By default, the training is done by launching the container in the instance you specify, so you’ll need to pass in the path to the training Docker image for the training environment you’ve developed. However, if you specify the framework for your estimator here, you can pass in the framework version and Python version to use, and it will automatically fetch the version-appropriate container image from Amazon ECR.

When you’re done defining your PyTorch Estimator, you need to define the steps involved in training it. You can do this by passing the PyTorch Estimator you defined earlier as an argument and the location of the input data. When you pass in the location of the input data, the SageMaker training job will download the train and test data to a specific path in the container using the format /opt/ml/input/data/<channel_name> (for example, /opt/ml/input/data/train).
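Inside the training script, the channel paths can be resolved with a small helper like the one below. This is a sketch that assumes the SageMaker training toolkit is in use, which exposes each channel through an environment variable named SM_CHANNEL_<NAME>; the helper name `channel_dir` is made up for this example.

```python
import os


def channel_dir(channel_name):
    """Return the local directory where a training channel is mounted."""
    # Prefer the SM_CHANNEL_<NAME> variable when it is set; otherwise fall
    # back to the default path format /opt/ml/input/data/<channel_name>.
    env_key = f"SM_CHANNEL_{channel_name.upper()}"
    return os.environ.get(env_key, f"/opt/ml/input/data/{channel_name}")


if __name__ == "__main__":
    for name in ("train", "test"):
        print(name, "->", channel_dir(name))
```

Reading the path from the environment first lets the same train.py run locally with a different data directory.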

In addition, when defining a PyTorch Estimator, you can use metric definitions to monitor the learning metrics generated while the model is being trained with Amazon CloudWatch. You can also specify the path where the model artifacts are stored after training by specifying estimator_output_path, and you can use the parameters required for model training by specifying model_hyperparameters. See the following code:
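The snippet below is a hedged sketch of what such settings might look like, not the configuration used in this project. The dictionary keys mirror keyword arguments of the SageMaker Python SDK's PyTorch estimator, but every value (paths, versions, instance type, hyperparameters, log format) is an assumption. The helper `extract_metrics` is hypothetical and only demonstrates how a metric definition's regular expression picks values out of a training log line.

```python
import re

# Hypothetical values; only the key names follow the PyTorch estimator's arguments.
estimator_output_path = "s3://example-bucket/ncf/model-artifacts/"
model_hyperparameters = {"epochs": 20, "batch_size": 256, "lr": 0.001}

estimator_kwargs = {
    "entry_point": "train.py",
    "source_dir": "src",
    "framework_version": "1.13",
    "py_version": "py39",
    "instance_type": "ml.g4dn.2xlarge",
    "instance_count": 1,
    "output_path": estimator_output_path,
    "hyperparameters": model_hyperparameters,
    "metric_definitions": [
        {"Name": "train:loss", "Regex": r"train_loss=([0-9.]+)"},
        {"Name": "test:hit_ratio", "Regex": r"HR@10=([0-9.]+)"},
    ],
}


def extract_metrics(log_line, definitions):
    """Apply each metric regex to one log line and collect the captured values."""
    found = {}
    for definition in definitions:
        match = re.search(definition["Regex"], log_line)
        if match:
            found[definition["Name"]] = float(match.group(1))
    return found


if __name__ == "__main__":
    line = "epoch=3 train_loss=0.412 HR@10=0.655"
    print(extract_metrics(line, estimator_kwargs["metric_definitions"]))
```

Testing the regular expressions against a sample log line before launching a training job is a cheap way to catch metrics that would otherwise never appear in CloudWatch.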

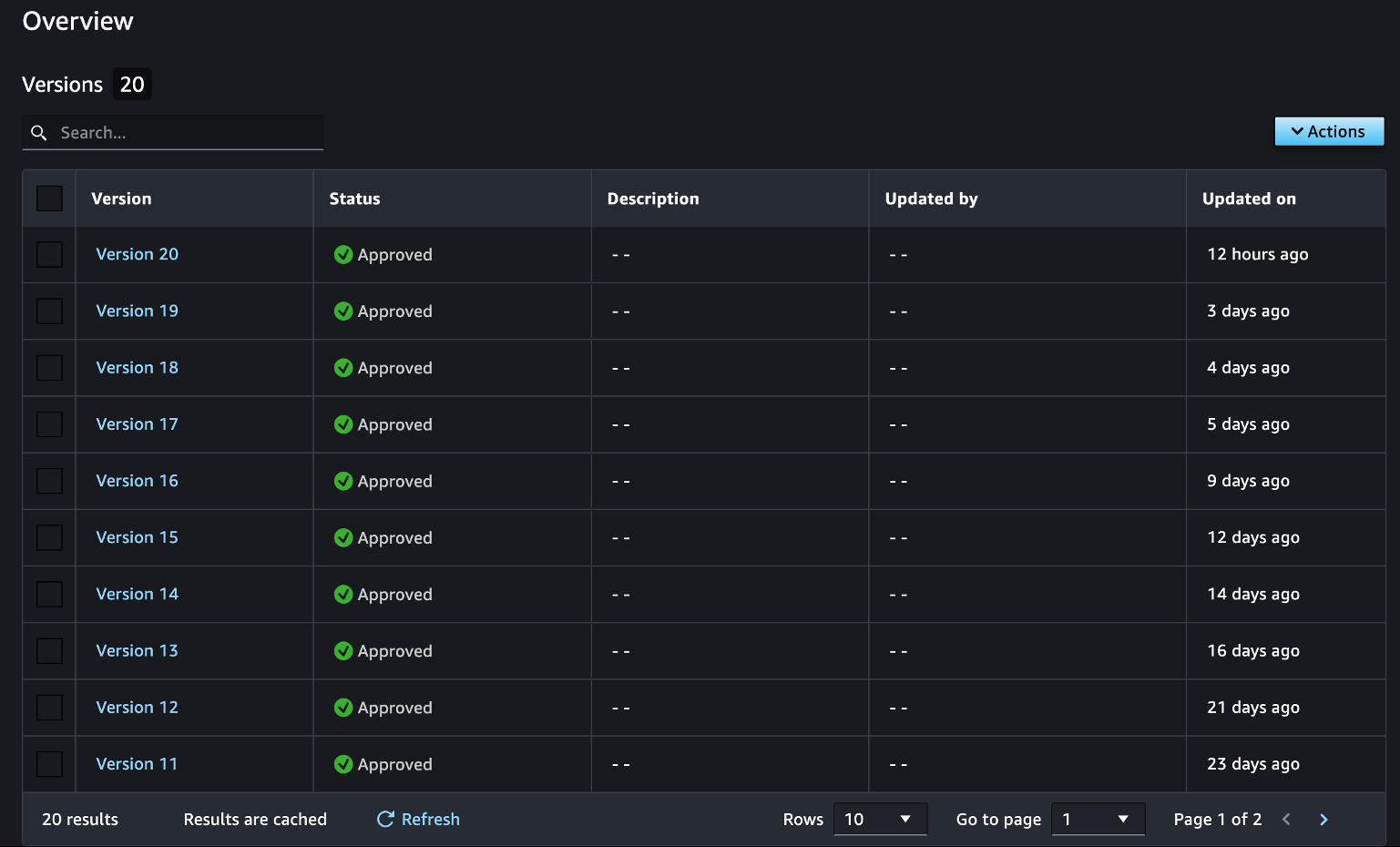

Create a model package group

The next step is to create a model package group to manage your trained models. By registering trained models in model packages, you can manage them by version, as shown in the following screenshot. This information allows you to reference previous versions of your models at any time. This process only needs to be done one time when you first train a model, and you can continue to add and update models as long as they declare the same group name.

See the following code:
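As a minimal sketch of the "create only once" behavior, the helper below checks for an existing group before creating one. It assumes a client that behaves like `boto3.client("sagemaker")` and, for brevity, ignores pagination of the list call. The function name and the group name used in the usage note are hypothetical.

```python
def ensure_model_package_group(sm_client, group_name, description=""):
    """Create the model package group only if it does not exist yet.

    `sm_client` is expected to behave like boto3.client("sagemaker").
    Returns True when a new group was created, False when it already existed.
    """
    response = sm_client.list_model_package_groups(NameContains=group_name)
    existing = {
        summary["ModelPackageGroupName"]
        for summary in response.get("ModelPackageGroupSummaryList", [])
    }
    if group_name in existing:
        return False
    sm_client.create_model_package_group(
        ModelPackageGroupName=group_name,
        ModelPackageGroupDescription=description,
    )
    return True
```

With boto3 installed and credentials configured, a call might look like `ensure_model_package_group(boto3.client("sagemaker"), "ncf-models")`.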

Add a trained model to a model package group

The next step is to add a trained model to the model package group you created. In the following code, when you declare the Model class, you get the result of the previous model training step, which creates a dependency between the steps. A step with a declared dependency can only be run if the previous step succeeds. However, you can use the DependsOn option to declare a dependency between steps even when the data is not causally related.
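To illustrate how data dependencies and explicit DependsOn declarations together determine the run order, here is a conceptual sketch using Python's standard library. It does not use the SageMaker SDK, and the step names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical step names. Each key maps to the set of steps it must wait for.
dependencies = {
    "train": set(),
    # Data dependency: registration consumes the training step's output.
    "register_model": {"train"},
    # Data dependency on "train" plus an explicit DependsOn on "register_model".
    "create_model": {"train", "register_model"},
    "deploy": {"create_model"},
}


def run_order(graph):
    """Return one valid execution order that respects every dependency."""
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(run_order(dependencies))
```

Because each step here waits on the one before it, only one order is possible; without the explicit dependency, model creation could start as soon as training finished.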

After the trained model is registered in the model package group, you can use this information to manage and track future model versions, create a real-time SageMaker endpoint, run a batch transform job, and more.

Create a SageMaker model

To create a real-time endpoint, an endpoint configuration and a model are required. To create a model, you need two basic elements: an S3 address where the model’s artifacts are stored, and the path to the inference Docker image that will run the model’s artifacts.

When creating a SageMaker model, you need to pay attention to the following steps:

Provide the result of the model training step, step_train.properties.ModelArtifacts.S3ModelArtifacts, which will be converted to the S3 path where the model artifact is stored, as the model_data argument.

Because you specified the PyTorchModel class, framework_version, and py_version, this information is used to get the path to the inference Docker image through Amazon ECR. This is the inference Docker image that is used for model deployment. Make sure to use the same PyTorch framework version, Python version, and other details for inference that you used to train the model.

Provide inference.py as the entry point script to handle invocations.

This step sets a dependency on the model package registration step you defined through the DependsOn option.

Create a SageMaker endpoint

Now you need to define an endpoint configuration based on the created model, which will create an endpoint when deployed. Because the SageMaker Python SDK doesn’t support a step related to deployment (as of this writing), you can use Lambda to register that step. Pass the necessary arguments to Lambda, such as instance_type, and use that information to create the endpoint configuration first. Because you’re calling the endpoint based on endpoint_name, you need to make sure that variable is defined with a unique name. In the following Lambda function code, based on the endpoint_name, you update the model if the endpoint exists, and deploy a new one if it doesn’t:
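A minimal sketch of this update-or-create logic is shown below. It is an illustration under stated assumptions rather than the function used at LotteON: the event keys, the variant name, and the helper `deploy_endpoint` are hypothetical, and the client is expected to behave like `boto3.client("sagemaker")`.

```python
def deploy_endpoint(sm_client, endpoint_name, config_name, model_name,
                    instance_type, instance_count=1):
    """Create a new endpoint configuration, then update or create the endpoint.

    Returns "updated" if the endpoint already existed, otherwise "created".
    """
    sm_client.create_endpoint_config(
        EndpointConfigName=config_name,
        ProductionVariants=[{
            "VariantName": "AllTraffic",
            "ModelName": model_name,
            "InstanceType": instance_type,
            "InitialInstanceCount": instance_count,
        }],
    )
    listing = sm_client.list_endpoints(NameContains=endpoint_name)
    names = {entry["EndpointName"] for entry in listing.get("Endpoints", [])}
    if endpoint_name in names:
        # Existing endpoint: point it at the new configuration.
        sm_client.update_endpoint(EndpointName=endpoint_name,
                                  EndpointConfigName=config_name)
        return "updated"
    sm_client.create_endpoint(EndpointName=endpoint_name,
                              EndpointConfigName=config_name)
    return "created"


def lambda_handler(event, context, sm_client=None):
    """Lambda entry point; the event keys are assumptions for this sketch."""
    if sm_client is None:
        import boto3  # provided by the Lambda Python runtime
        sm_client = boto3.client("sagemaker")
    action = deploy_endpoint(
        sm_client,
        endpoint_name=event["endpoint_name"],
        config_name=event["endpoint_config_name"],
        model_name=event["model_name"],
        instance_type=event["instance_type"],
    )
    return {"statusCode": 200, "body": action}
```

Endpoint configuration names must be unique, so in practice the caller would typically derive `endpoint_config_name` from a timestamp or model version on each run.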

To get the Lambda function into a step in the SageMaker pipeline, you can use the SDK associated with the Lambda function. By passing the location of the Lambda function source as an argument of the function, you can automatically register and use the function. In addition to this, you can define LambdaStep and pass it the required arguments. See the following code:

Create a SageMaker pipeline

Now you can create a pipeline using the steps you defined. You can do this by defining a name for the pipeline and passing in the steps to be used in the pipeline as arguments. After that, you can run the defined pipeline through the start function. See the following code:

After this process is complete, an endpoint is created with the trained model and is ready to serve requests with the deep learning-based model.

MLOps component 3: Real-time inference with model serving

Now let’s see how to invoke the model in real time from the created endpoint, which can also be accessed using the SageMaker SDK. The following code is an example of getting real-time inference values for input values from an endpoint deployed through the invoke_endpoint function. The features you pass as arguments to the body are passed as input to the endpoint, which returns the inference results in real time.
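The call can be sketched as follows, assuming a client that behaves like `boto3.client("sagemaker-runtime")`. The payload layout (parallel `user` and `item` lists) and the function name are assumptions for illustration; the real layout must match whatever inference.py expects.

```python
import json


def recommend_scores(runtime_client, endpoint_name, user_id, item_ids):
    """Send one inference request and return the parsed JSON response.

    The payload layout below is hypothetical and must match inference.py.
    """
    payload = {"user": [user_id] * len(item_ids), "item": list(item_ids)}
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=json.dumps(payload),
    )
    # The response body is a stream, so read it before decoding.
    return json.loads(response["Body"].read())
```

Passing the client in as an argument keeps the function easy to exercise with a stub before pointing it at a live endpoint.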

When we configured the inference function, we had it return the items in the order that the user is most likely to like among the items passed in. The preceding example returns items 1–25 in order of likelihood of being liked by the user at index 0.
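The ordering logic itself is simple. As a hedged sketch (the function name and the idea that the model emits one score per candidate item are assumptions), it could look like this:

```python
def rank_items(item_ids, scores):
    """Return item IDs sorted by predicted score, most likely first."""
    if len(item_ids) != len(scores):
        raise ValueError("item_ids and scores must have the same length")
    # Pair each item with its score, then sort by score in descending order.
    ranked = sorted(zip(item_ids, scores), key=lambda pair: pair[1], reverse=True)
    return [item_id for item_id, _ in ranked]
```

For instance, `rank_items([1, 2, 3], [0.2, 0.9, 0.5])` returns `[2, 3, 1]`.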

We added business logic to the inference function, configured it in Lambda, and connected it with API Gateway to implement the API’s ability to return recommended items in real time. We then conducted performance testing of the online service. We load tested it with Locust using five g4dn.2xlarge instances and found that it could be reliably served in an environment with 1,000 TPS.
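A Lambda function behind API Gateway has to return a response in the proxy integration format (status code, headers, and a string body). The sketch below shows that shape under stated assumptions: the query parameter names `user_id` and `size`, the default size, and the injected `recommender` callable are all hypothetical stand-ins for the real business logic and endpoint call.

```python
import json


def build_response(status_code, payload):
    """Shape a Lambda proxy integration response for API Gateway."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def handler(event, context, recommender=None):
    """Hypothetical handler: `recommender` maps (user_id, size) to item IDs."""
    params = event.get("queryStringParameters") or {}
    user_id = params.get("user_id")
    if not user_id:
        return build_response(400, {"error": "user_id is required"})
    try:
        size = int(params.get("size", "10"))
    except ValueError:
        return build_response(400, {"error": "size must be an integer"})
    items = recommender(user_id, size) if recommender else []
    return build_response(200, {"user_id": user_id, "items": items})
```

Validating inputs before calling the endpoint keeps malformed requests from consuming inference capacity during load.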

MLOps component 4: CI/CD structure

A CI/CD structure is a fundamental part of DevOps, and is also an important part of organizing an MLOps environment. AWS CodeCommit, AWS CodeBuild, AWS CodeDeploy, and AWS CodePipeline collectively provide all the functionality you need for CI/CD, from source code management to build, deployment, and batch management. These services integrate not only with each other, but also with other tools such as GitHub and Jenkins, so if you have an existing CI/CD structure, you can use them individually to fill in the gaps. Therefore, we expanded our CI/CD structure by linking only the CodeBuild configuration described earlier to our existing CI/CD pipeline.

We linked our SageMaker notebooks with GitLab for code management, and when we were done, we replicated them to Amazon S3 through Jenkins. After that, we set the S3 path as the default repository path of the NCF CodeBuild project as described earlier, so that we could build the project with CodeBuild.

Conclusion

So far, we’ve seen the end-to-end process of configuring an MLOps environment using AWS services and providing real-time inference services based on deep learning models. By configuring an MLOps environment, we’ve created a foundation for providing high-quality services based on various algorithms to our customers. We’ve also created an environment where we can quickly proceed with prototype development and deployment. The NCF model we developed as the prototype algorithm also achieved good results when it was put into service. In the future, the MLOps platform can help us quickly develop and experiment with models that match LotteON data to provide our customers with a progressively higher-quality recommendation experience.

Using SageMaker in conjunction with various AWS services has given us many advantages in developing and operating our services. As model developers, we didn’t have to worry about configuring the environment settings for frequently used packages and deep learning-related frameworks because the environment settings were configured for each library, and we felt that the connectivity and scalability between AWS services using AWS CLI commands and related SDKs were great. Additionally, as a service operator, it was good to track and monitor the services we were running because CloudWatch connected the logging and monitoring of each service.

You can also check out the NCF and MLOps configuration for hands-on practice on our GitHub repo (Korean).

We hope this post will help you configure your MLOps environment and provide real-time services using AWS services.

About the Authors

SeungBum Shim is a data engineer in the Lotte E-commerce Recommendation Platform Development Team, responsible for discovering ways to use and improve recommendation-related products through LotteON data analysis, and developing MLOps pipelines and ML/DL recommendation models.

HyeKyung Yang is a research engineer in the Lotte E-commerce Recommendation Platform Development Team and is in charge of developing ML/DL recommendation models by analyzing and using various data and developing a dynamic A/B test environment.

Jieun Lim is a data engineer in the Lotte E-commerce Recommendation Platform Development Team and is in charge of operating LotteON’s personalized recommendation system and developing personalized recommendation models and dynamic A/B test environments.

Jesam Kim is an AWS Solutions Architect who helps enterprise customers adopt and troubleshoot cloud technologies, and provides architectural design and technical support to address their business needs and challenges, especially in AI/ML areas such as recommendation services and generative AI.

Gonsoo Moon is an AWS AI/ML Specialist Solutions Architect and provides AI/ML technical support. His main role is to collaborate with customers to solve their AI/ML problems based on various use cases and production experience in AI/ML.
